AI is far from the science fiction vision. But generative pre-trained transformer (GPT) AIs like ChatGPT are now mainstream. GPT processes a tremendous amount of existing text, essentially reading millions of articles and books, “learning” how to generate human-like writing.

For an example of how it works and how far into general awareness the tech has come, watch this ad for Mint Mobile, read by Ryan Reynolds. The chat app was asked to “Write a commercial for Mint Mobile in the voice of Ryan Reynolds. Use a joke, a curse word, and [marketing messaging the company wanted in the ad no matter what the AI generated].”

The above is an ad that uses a gimmicky tech tool to tie into a news cycle because a celebrity is involved. Reynolds’ description of how ChatGPT works from the end user’s perspective is spot on, even though the ad leaves out the usual advertising work that went on behind the scenes.

My digi-pal, Brandi D. Addison, asked ChatGPT to write an article in her style. It was an incredibly good impression. Addison is a professional reporter, and ChatGPT had likely been trained on hundreds of articles under her byline.

While she’s a better writer than an AI, I’m also not an executive at a media company trying to raise revenue and cut costs. Meaning, I don’t have my dollar sign beer goggles on.

The folks at Red Ventures, who took ownership of CNET in 2020, seem to have the dollar sign beer goggles on because they are dancing on an AI table.

Without writing the history of CNET from 1994 to now, let’s just say that CNET was about as credible as you could get in the tech media for a long time. Since 2020, when Red Ventures took ownership of the brand, CNET has published entire articles generated by AI.

To my knowledge, no one has stated that CNET was using ChatGPT. According to The Verge, CNET used at least one AI called Wordsmith. But which content is AI-written and how it is generated is largely unknown to most CNET editorial staff. One thing is almost guaranteed: the AIs CNET is using are generative pre-trained transformers.

Current generation GPT technology is a party trick, like juggling. Like juggling, most of your friends will be slightly impressed the first time they see it but less so each subsequent time. Trust me on this.

My friend and fellow juggler Christopher Gronlund recently pointed out that when prompted to write fiction, AI repeats a lot of the same ideas. He says it’s like a five-year-old kid who plays certain music well but without much feeling.

With fiction, at least AI cannot be wrong, as it often is when answering non-fiction questions.

CNET’s AI-generated articles, as with so many GPT articles, are subject to being confidently wrong. Some of CNET’s AI-generated articles were clearly and irresponsibly wrong. The errors were obvious, but they appeared in articles aimed at readers who were new to the topic and trying to learn.

I’ve long held that human reliance on AI is creating something godlike and incredibly stupid. AIs are used to approve loans, determine what content people see online, and decide who gets bail.

AI from Google is already ranking AI-generated CNET articles linking to credit card applications that use AI to decide who is approved, all without a user needing to know AI is involved at all.

Removing the obfuscation and asking an AI questions feels like asking an oracle. It creates an expectation of something definitive and correct that a search engine doesn’t. A search engine feels like searching. AI feels like an answer.

The AI’s answers, even to factual questions, can be wrong. Can Duruk from Margins pointed out that ChatGPT will tell you Ankara is the capital of Turkey if you ask for the capital. But the AI will confirm that Istanbul is the capital if you ask whether it is.

I was going to make a joke here about asking Alex Cranz to comment for an article titled ‘I Sent The Verge’s Managing Editor a Pitch for AI Writing Software’. But in light of the layoffs at Vox Media, I’d rather not joke about an editor at The Verge using AI to replace writers at all.

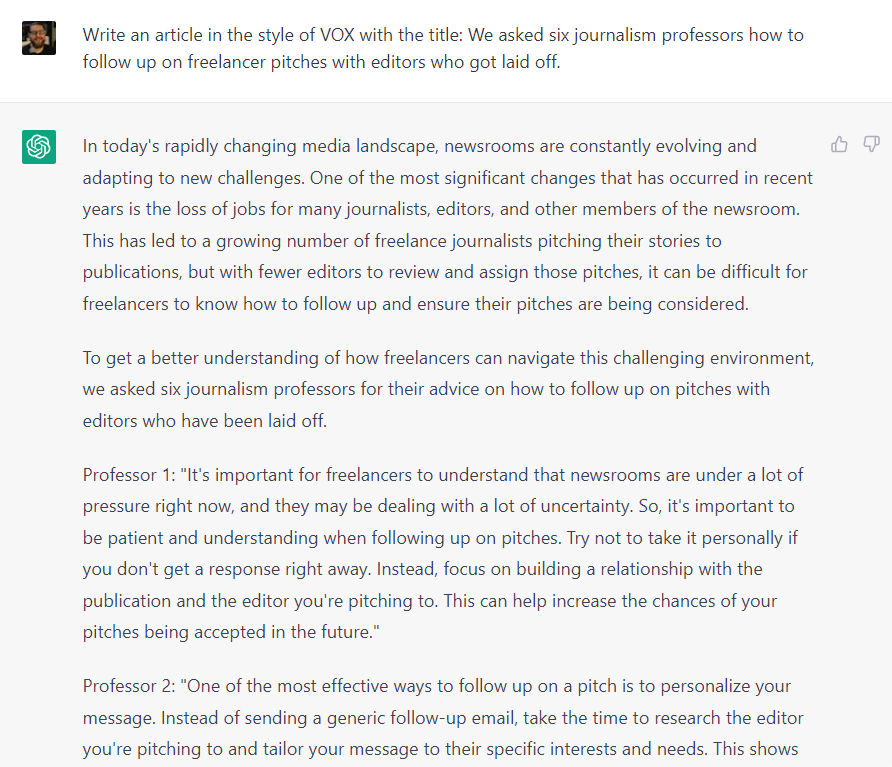

So I asked an AI to write an article in the style of Vox with the title: “We asked six journalism professors how to follow up on freelancer pitches with editors who got laid off.” Here’s what the AI said:

The AI was happy to advise as if it were six journalism professors. The problem is that the advice is for pitching editors who got laid off, and you don’t pitch them at all. But ChatGPT wrote a neat little conclusion that completely ignores that.

In conclusion, freelancers are facing a challenging environment when it comes to following up on pitches with editors who have been laid off. However, by being patient, understanding, and persistent, freelancers can increase the chances of their pitches being accepted. By building a relationship with the publication and the editor, personalizing your message, offering multiple story ideas, being flexible and willing to negotiate, offering additional resources or support and following up persistence will help freelancers navigate this challenging environment.

Let me conclude by saying don’t harass recently laid-off editors with pitches for the publication they once worked for.

Article by Mason Pelt of Push ROI. First published on MasonPelt.com on January 23, 2023. Photo by Andrea De Santis on Unsplash.